The Sleepworking Economy

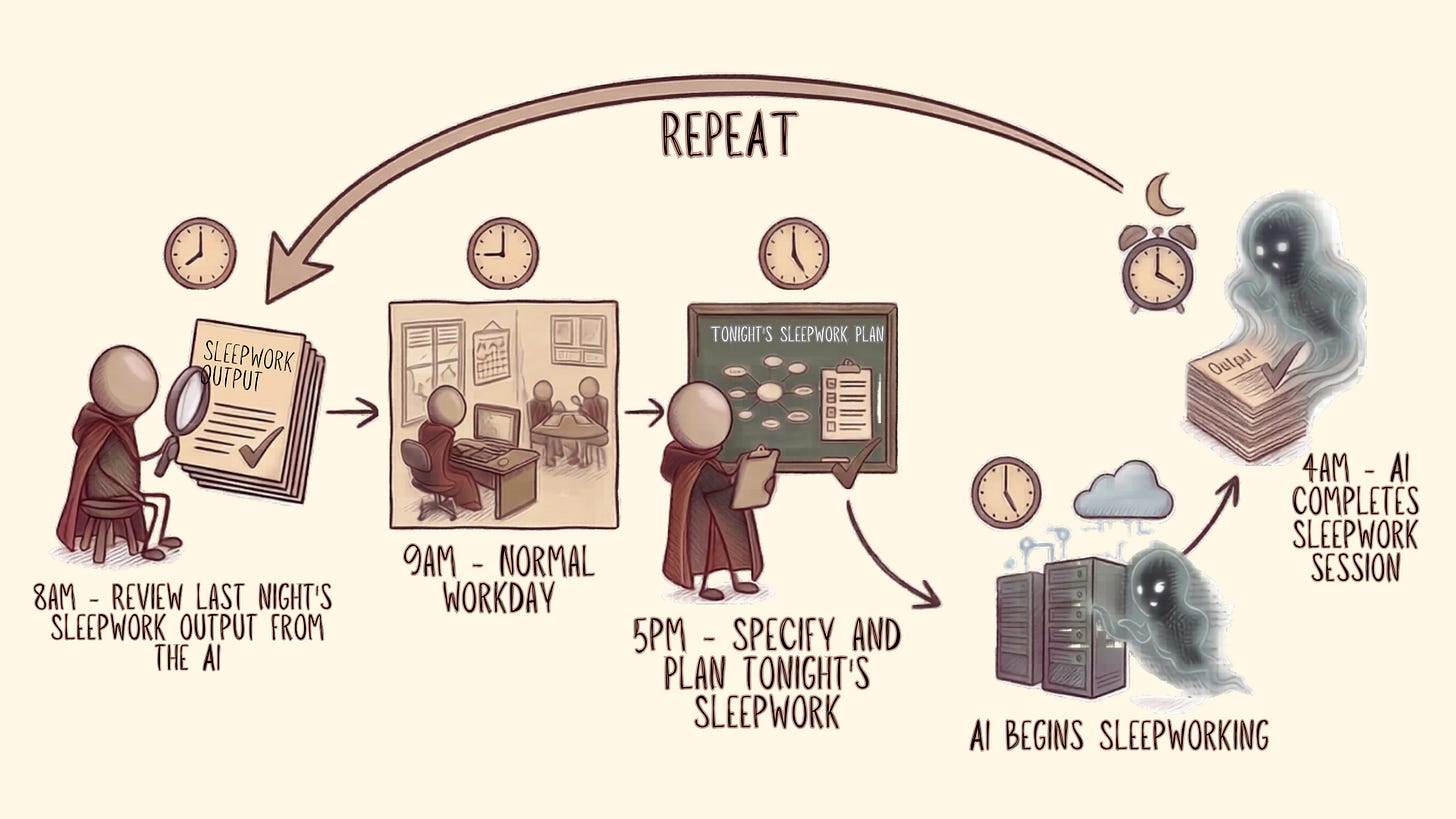

As AI becomes capable of running autonomously for hours on end, the biggest productivity unlock may not be how you optimise your own working hours but what your agents do when you're sleeping.

If you’re a bit of an Economics nerd like me, you may be aware that the correlation between a country’s Average Weekly Hours Worked and its Productivity is quite weak. There are many reasons for this, but the one that matters for this post is Presenteeism, i.e., the (probably obvious) point that simply being present at work does not guarantee productivity.

I’m sure everyone has worked with a person who (somehow) is able to achieve more in 1 hour than the average person achieves all day and, conversely, people who seem to spend an entire week on something most people can get done before 10am on Monday.

All that being said, most people would agree that being present is at least a prerequisite for being productive, i.e., nothing gets done if you’re not at work.

And so, typically, people spend their time trying to optimise their working day: streamlining their workflows, shuffling tasks into a more logical order, carving out blocks of “focus time”, etc., etc. That’s all sensible. Don’t stop.

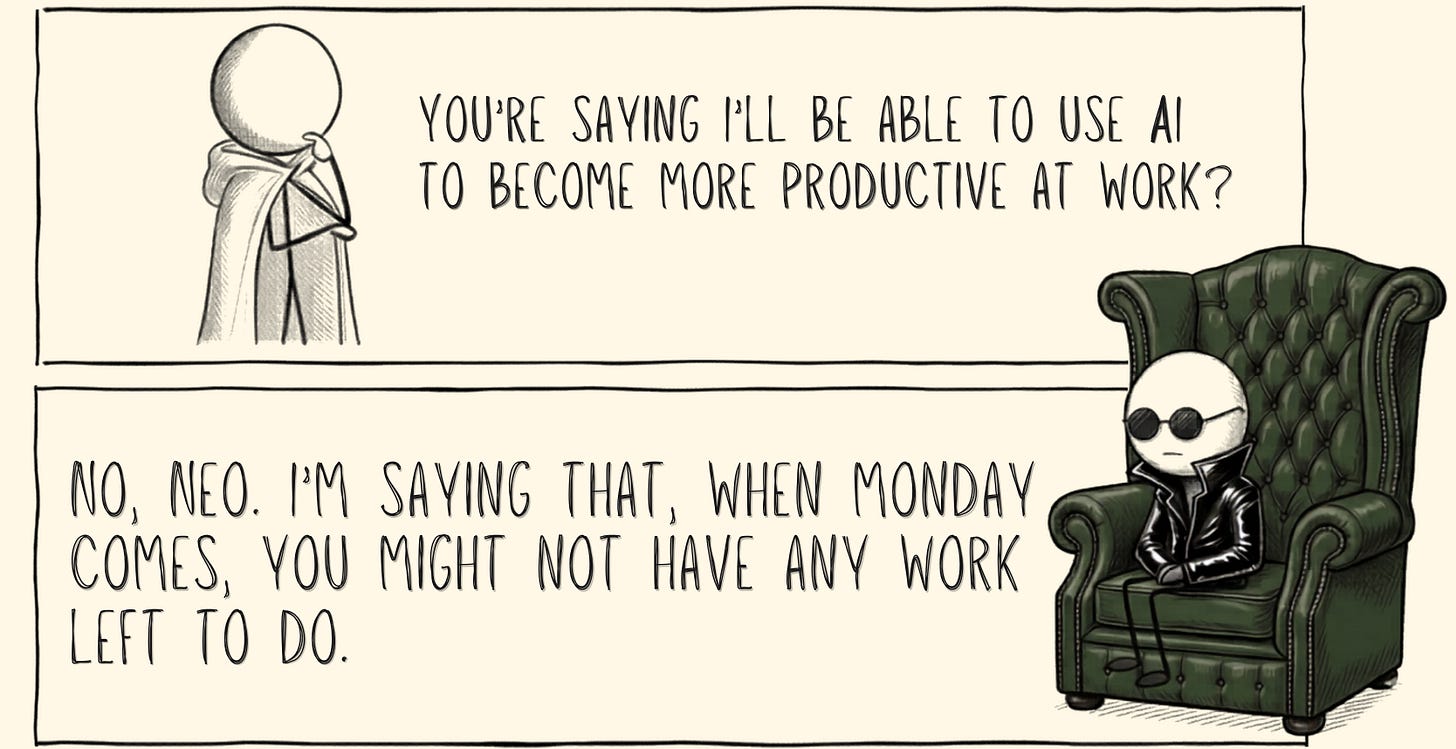

But, as AI “agents” and tools like Claude Co-work become capable of running autonomously for multiple hours at a time, is this really the best way to optimise productivity?

By now, you can probably see where I’m heading with this.

I’m making the case that there is now a better way to quickly increase your productivity: focus on how you get more done when you’re not at work.

Since everyone else has taken all the cool names, I’m going to default to my standard strategy (second-tier wordplay) and call this “Sleepworking”.

Quick Arithmetic

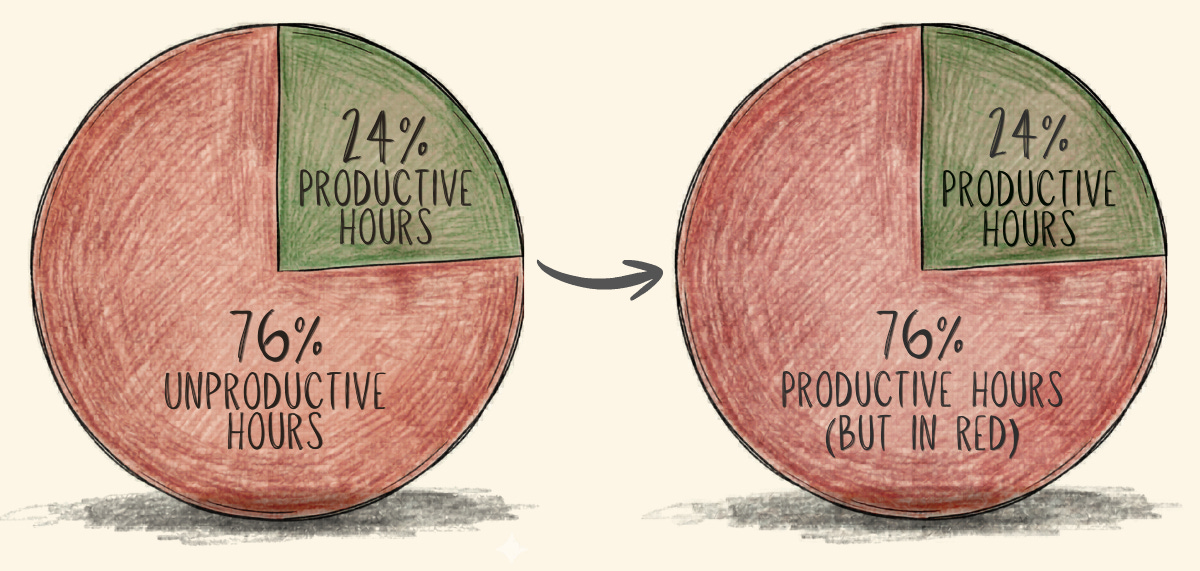

Some quick numbers to set the scene here. These are all approximations; I know everyone will have a slightly different work-life balance, but I believe they represent the majority of full-time workers reasonably well.

A human works ~40 hours per week (outside France).

An earth-week contains 168 hours.

An earth-week contains 128 non-working hours.

76% of the week is made up of non-working hours. So, if you can find a way to convert some/all of that time into productive hours, it will add up (quickly!)

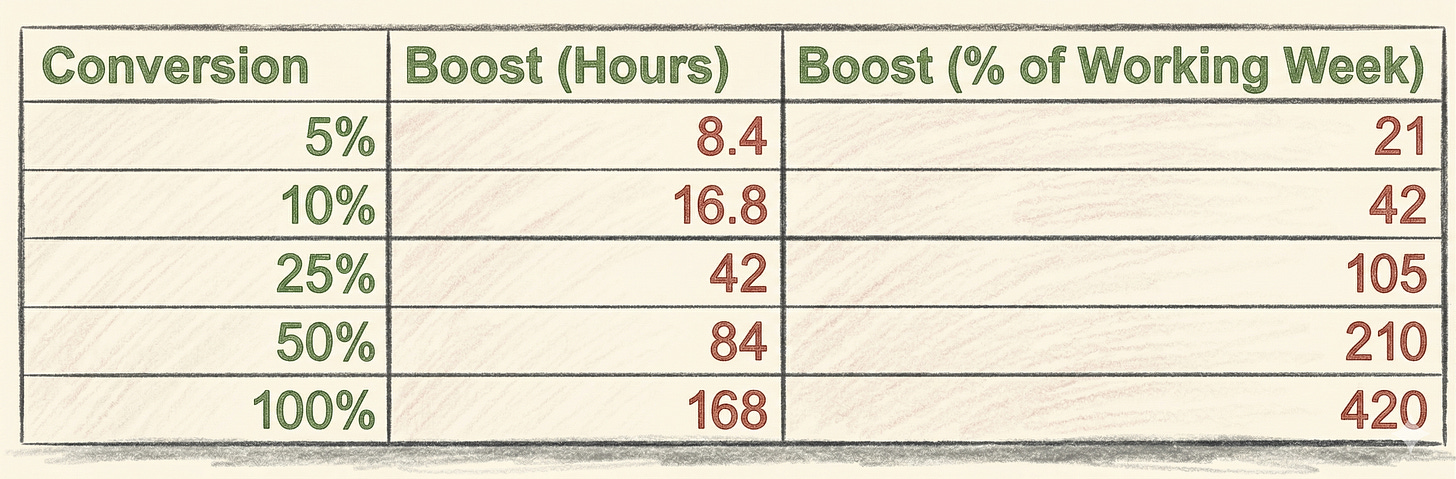

Just to emphasise how big a deal this could be, I’ve shown how big of a boost you get if you manage to successfully convert different proportions of your non-working hours into productive hours.

By Boost, I just mean how many unproductive hours you’ve managed to convert (second column) and how big this is relative to the average forty-hour week (third column).

Note: this is a MASSIVE simplification. It doesn’t factor in the productivity gains that AI gives you during the working day OR the time required to plan/review. It’s still directionally correct IMO.

And, theoretically, since AI agents can work in parallel (something you can’t easily do as an individual), the conversion rates could go beyond 100%. We’ll get to that later, but for now, I think we’re better off targeting a more conservative conversion ratio. Baby steps.

If you can work out how to convert just 5% of your non-working hours into productive hours, you’ll add about 6.4 hours a week to your output, which is close to a full human work day!
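The arithmetic above is easy to reproduce. The snippet below is a minimal sketch that uses only the figures already quoted (a 168-hour week and a 40-hour working week); the conversion rates in the loop and the `boost` function name are illustrative assumptions, not values taken from the table.

```python
HOURS_PER_WEEK = 7 * 24                              # 168 hours in a week
WORKING_HOURS = 40                                   # the assumed working week
NON_WORKING_HOURS = HOURS_PER_WEEK - WORKING_HOURS   # 128 hours


def boost(conversion_rate: float) -> tuple[float, float]:
    """Return (hours converted, those hours as a fraction of a 40-hour week)."""
    converted = NON_WORKING_HOURS * conversion_rate
    return converted, converted / WORKING_HOURS


if __name__ == "__main__":
    share = NON_WORKING_HOURS / HOURS_PER_WEEK
    print(f"Non-working share of the week: {share:.0%}")
    # Illustrative conversion rates (assumed, not from the original table).
    for rate in (0.05, 0.10, 0.25, 0.50, 1.00):
        hours, relative = boost(rate)
        print(f"{rate:>4.0%} converted: {hours:5.1f} h, +{relative:.0%} of a 40-hour week")
```

At a 5% conversion rate this works out to 6.4 hours, or 16% of a 40-hour week. Rates above 1.0 are also valid inputs if you want to model agents working in parallel.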

Ok, so how do you actually do this?

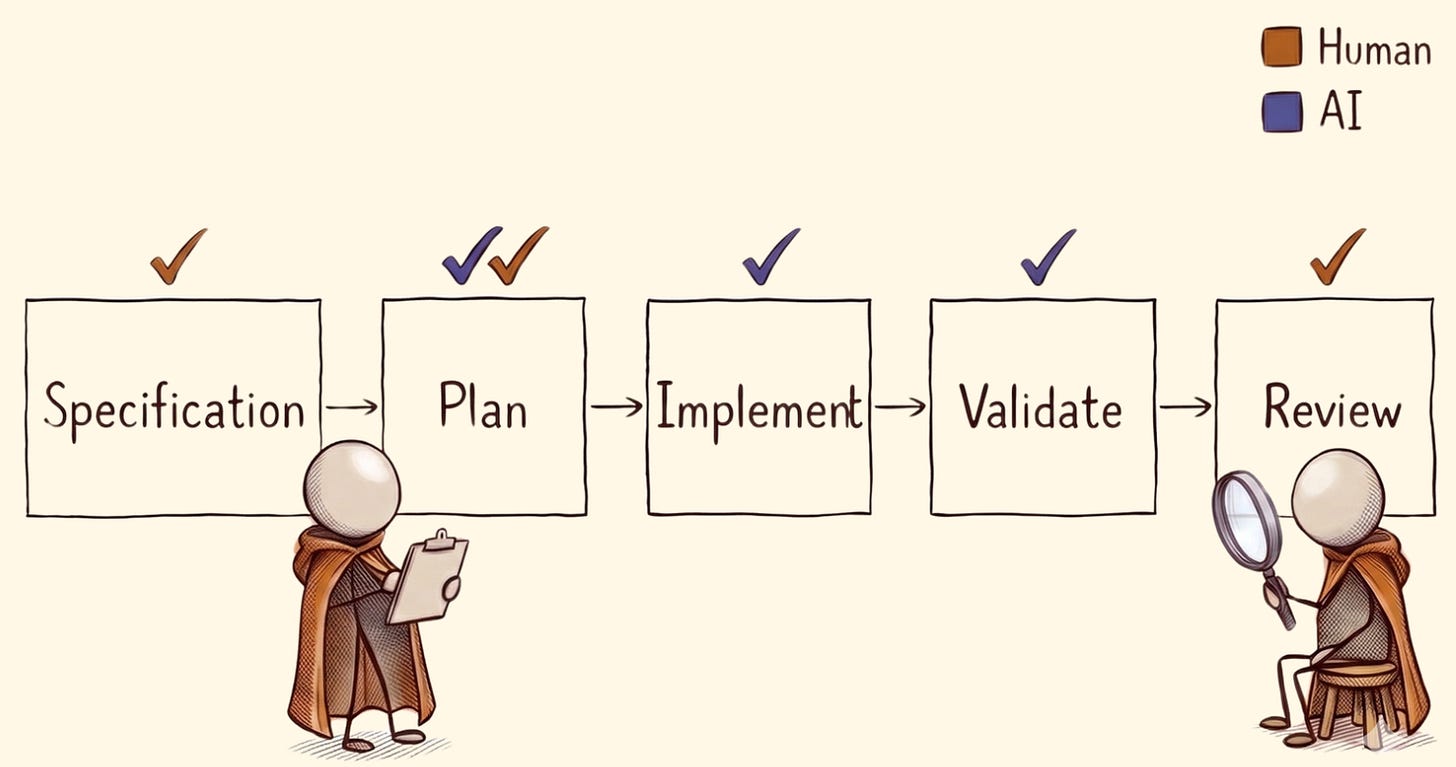

As Balaji so eloquently put it here, AI works middle-to-middle, not end-to-end. In other words, the work that AI probably can’t (or probably shouldn’t) do is usually at the start and end of a process — specifying and reviewing the final outcomes.

Note: I think everyone can (and should) figure this out as quickly as possible; I believe it will feel more natural to you if you’re from a management background and have experience delegating tasks, especially in technical domains.

This is a simple way to think about any significant piece of work…

In later articles, I’ll dive into each of these steps in a bit more detail and create some reusable instructions/prompts to help. For now, you should combine the thoughts below, along with AI and some conversations with your team, to figure this out.

Specification

You describe the required outputs in rigorous detail — the better job you do of this, the better result you’ll get: the content, the structure, the layout, any required conventions, in-house standards or tests, file formats, tone-of-voice, where to get data from, where to put data, colour palette, logic, rules, etc., etc., etc.

Of course, you might use Claude or ChatGPT to help you refine and ideate on this, but ultimately, the human is the one who needs to specify the goals and outputs required.

Plan

Once you know what you’re working on, you need to decide on the how. AI should be involved here, but you still need to review the details before letting it loose. Work with AI to help break down the work into smaller concrete sub-tasks; think about what sources of information or tools will be needed.

Think about any milestones or “checkpoints” where AI should save its current progress. Think about how AI finalises each task and marks progress against the bigger goal.

Remember, the idea here is that you won’t be present, so you also need to provide guidance on what the AI should do if it can’t achieve one of the sub-tasks. Think about how it should log its progress and decisions once it begins implementing the plan.
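To make the planning ideas a little more concrete, here is one way a plan could be written down so that both you and the agent can read it. This is only a sketch: the `Plan` and `SubTask` classes, the field names, and the `"skip_and_log"` failure policy are all hypothetical, not part of any particular agentic tool.

```python
import json
from dataclasses import dataclass, field, asdict
from pathlib import Path


@dataclass
class SubTask:
    name: str
    done_when: str                      # how the agent knows the sub-task is finished
    on_failure: str = "skip_and_log"    # hypothetical policy for when it gets stuck
    checkpoint: bool = False            # save progress after this sub-task?
    status: str = "pending"


@dataclass
class Plan:
    goal: str
    subtasks: list[SubTask] = field(default_factory=list)
    log: list[str] = field(default_factory=list)

    def complete(self, name: str, note: str = "") -> bool:
        """Mark a sub-task done, log it, and report whether to checkpoint now."""
        for task in self.subtasks:
            if task.name == name:
                task.status = "done"
                self.log.append(f"done: {name}" + (f" ({note})" if note else ""))
                return task.checkpoint
        raise KeyError(f"unknown sub-task: {name}")

    def progress(self) -> float:
        """Fraction of sub-tasks completed against the bigger goal."""
        if not self.subtasks:
            return 0.0
        done = sum(1 for task in self.subtasks if task.status == "done")
        return done / len(self.subtasks)

    def save(self, path: Path) -> None:
        """Write the plan, statuses and decision log to disk as JSON."""
        path.write_text(json.dumps(asdict(self), indent=2))
```

When `complete` returns `True`, the caller would save the plan with `save(...)`, which gives you a readable record of progress and decisions to pick up during review.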

Most decent agentic tools have a Plan mode now — learn how that works and start there. In my experience, it won’t enable multi-hour runs, but it will get you off the starting blocks.

Implement

With a detailed plan in place and solid guardrails (see next section), you pull the ripcord, stand back and let the AI run. If your plan is well documented, the AI has access to the data, tools and connections it needs, and the guardrails have been well designed, it should work autonomously to accomplish the goals you’ve laid out.

Validation

This is a BIG one. I think the two biggest reasons people fail to get good results when asking AI to run for long periods of time, in an unsupervised fashion, are:

Incomplete specification

Missing or vague validation processes.

You must provide a concrete set of instructions, rules, tests and standards in a way that AI can apply reliably. Validation should be done regularly at checkpoints along the way — not just once at the end. This keeps the AI on track.

In software engineering, this might be a suite of tests.

In copywriting, it might be an in-house style guide plus automated grammar and punctuation checking.

For design, it might be analysing image composition or verifying colour choices and font-faces, etc.
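As a rough illustration of rules that can be applied reliably at every checkpoint, the sketch below expresses two copywriting checks as small functions that each return a list of problems. The rule names (`max_length`, `banned_phrases`) and the `validate` helper are hypothetical examples, not a real style-guide tool.

```python
from typing import Callable

# A validator takes a draft and returns a list of problems (empty means it passed).
Validator = Callable[[str], list[str]]


def max_length(limit: int) -> Validator:
    """Build a rule that flags drafts longer than `limit` words."""
    def check(draft: str) -> list[str]:
        words = len(draft.split())
        return [f"too long: {words} words (limit {limit})"] if words > limit else []
    return check


def banned_phrases(phrases: list[str]) -> Validator:
    """Build a rule that flags phrases a style guide disallows."""
    def check(draft: str) -> list[str]:
        lowered = draft.lower()
        return [f"banned phrase: {p!r}" for p in phrases if p.lower() in lowered]
    return check


def validate(draft: str, validators: list[Validator]) -> list[str]:
    """Run every rule and collect all problems found at this checkpoint."""
    problems: list[str] = []
    for validator in validators:
        problems.extend(validator(draft))
    return problems


if __name__ == "__main__":
    rules = [max_length(50), banned_phrases(["synergy"])]
    for problem in validate("Our synergy is unmatched.", rules):
        print(problem)
```

The point of the design is that every rule gives an unambiguous pass or fail, so the agent can run the same checks after each sub-task instead of only once at the end.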

I’m not going to list everything here; I can’t. I do plan to create some resources to help people self-serve on this, but for now, just think about what you would do yourself if you had delegated this sub-task to another person and wanted to verify what they’d done before they spent too long going down the wrong track.

Review

You must do this yourself. Of course, you may use AI to assist, but fundamentally, if you care about the final output and want it to be done to a high standard, you are responsible for reviewing it yourself.

Use AI to help you approach the review phase in a sensible, logical order (e.g., you might start at a high level and work down into the finer details). Ask AI to point out important decisions that were made and any trade-offs involved.

It’s OK to spend a LONG time on this because, once you are converting days’ worth of unproductive time into value, it’s very easy to justify spending a lot of time reviewing.

You also, obviously, need a way to incorporate the review feedback — this will depend on the feedback itself:

If it’s small, you might edit the work yourself, or ask AI to fix up a few things.

If it’s bigger, you might specify and plan another cycle of work (this time with enhanced guardrails or new requirements based on the review outcomes).

Timeline

This exact approach was inspired by the Elves framework by John Ennis at Aigora. That Elves framework is targeted at software engineering work, but it’s generic enough that it can be reworked for many long-running, autonomous workloads.

What’s Next

I’m going to stop here as there’s already a lot to think about.

If you’re still using AI in a transactional/conversational style, you must take the time to explore these more autonomous, agentic tools (Claude Co-work, Claude Code, Microsoft Co-work, Manus, Codex, etc.).

My goal here is to adapt what I’m seeing in the engineering space and bring it to a wider audience. If you’re a manager or someone with lots of experience delegating, this will be an easier ride, but it will still be a lot to take in.

If you are from a technical background yourself, I’d encourage you to take a look at the following resources as they serve as the basis for this series.

Until next time.
